Standard of Care

I have seen good organizations and bad organizations get hacked and spill data. Determined criminals focused on their objectives will eventually find a way in and steal what they are after. But some agencies are simply not trying to protect their data, and some are resisting even the basic steps of cyber hygiene.

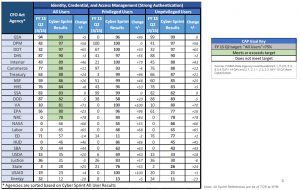

When I see agencies (State Department, Energy, and USAID in the table above) that aren’t implementing PIV cards, I take note. I mean, Homeland Security Presidential Directive-12 has only been around since 2004. Here we are 12 years later, and you think you can argue that this is an unfunded mandate? Brother, if any requirement should be baked into your base appropriation, HSPD-12 is it. (Of course, Section 508 and IPv6 are a couple of others, but I digress.) It is unconscionable that some agencies need to be told to use their PIV cards to access the agency network. This is one of those fundamental things that is easy to implement and makes a big difference in whether you will be hacked.

https://www.performance.gov/downloadpdf?file=Sprint%20Results%20Report%20FY15_Q2.pdf 8

I say that because IDs and passwords can be stolen relatively easily. An attacker can plant a keylogger, or stand up a fake website and trick someone into entering their ID and password. A PIV card changes the scenario: logging in requires something you have (the card) and something you know (the PIN). A hacker might be able to figure out my PIN, but the chances of my physical card winding up in China or Russia or with any other threat actor are very low.
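
To make the “something you have plus something you know” point concrete, here is a deliberately simplified sketch of a card-based challenge-response login. It is a toy built on assumptions, not how PIV actually works: a real PIV card holds an asymmetric private key and an X.509 certificate and signs on-card, whereas this sketch substitutes a shared HMAC key from Python’s standard library. The class `ToyCard`, the function `server_login`, the three-attempt lockout, and the PIN values are all hypothetical.

```python
import hashlib
import hmac
import secrets


class ToyCard:
    """Hypothetical stand-in for a smart card. The key is used only inside the
    object, and it answers challenges only after the correct PIN is presented."""

    def __init__(self, pin: str, key: bytes, max_attempts: int = 3):
        self._pin = pin                  # something you know
        self._key = key                  # something you have (lives on the card)
        self._max_attempts = max_attempts
        self._attempts_left = max_attempts
        self._unlocked = False

    def verify_pin(self, pin: str) -> bool:
        if self._attempts_left == 0:
            return False                 # card is locked; no more guessing
        if hmac.compare_digest(pin.encode(), self._pin.encode()):
            self._unlocked = True
            self._attempts_left = self._max_attempts
            return True
        self._attempts_left -= 1
        return False

    def sign(self, challenge: bytes) -> bytes:
        if not self._unlocked:
            raise PermissionError("PIN not verified")
        return hmac.new(self._key, challenge, hashlib.sha256).digest()


def server_login(card: ToyCard, enrolled_key: bytes, pin: str) -> bool:
    """Server side: issue a fresh challenge and check the card's response."""
    challenge = secrets.token_bytes(16)  # random each time, so a recorded response can't be replayed
    if not card.verify_pin(pin):
        return False
    response = card.sign(challenge)
    expected = hmac.new(enrolled_key, challenge, hashlib.sha256).digest()
    return hmac.compare_digest(response, expected)


# Enrollment: the server keeps a copy of the key (a simplification; real PIV uses a public key).
enrolled_key = secrets.token_bytes(32)
my_card = ToyCard(pin="4821", key=enrolled_key)
forged_card = ToyCard(pin="4821", key=secrets.token_bytes(32))  # attacker knows the PIN, not the card

print(server_login(my_card, enrolled_key, "4821"))      # True
print(server_login(forged_card, enrolled_key, "4821"))  # False
```

The forged card shows the argument in miniature: knowing the PIN gets the attacker nothing without the key that lives on the physical card, and the lockout keeps someone holding a stolen card from guessing the PIN indefinitely.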

Another issue is Windows XP. A bunch of agencies are still running Windows XP and Windows Server 2003. Microsoft no longer supports either one, which means you aren’t getting security patches for those operating systems. I would wager that if you connect one of those machines to the Internet, it will be compromised within 10 minutes. They simply aren’t secure.
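
Finding these machines is an asset management question. Below is a minimal sketch of flagging inventory entries that run an out-of-support operating system. The inventory rows and hostnames are hypothetical; the two dates are Microsoft’s published end-of-support dates for Windows XP (April 8, 2014) and Windows Server 2003 (July 14, 2015).

```python
from datetime import date

# Microsoft end-of-support dates for the two operating systems named above.
END_OF_SUPPORT = {
    "Windows XP": date(2014, 4, 8),
    "Windows Server 2003": date(2015, 7, 14),
}


def unsupported_hosts(inventory, as_of):
    """Return hostnames whose OS reached end of support on or before `as_of`."""
    flagged = []
    for host, os_name in inventory:
        eos = END_OF_SUPPORT.get(os_name)
        if eos is not None and eos <= as_of:
            flagged.append(host)
    return flagged


# Hypothetical inventory rows: (hostname, operating system)
inventory = [
    ("hr-ws-014", "Windows XP"),
    ("fin-db-02", "Windows Server 2003"),
    ("ops-ws-221", "Windows 10"),
]
print(unsupported_hosts(inventory, date(2016, 1, 1)))  # ['hr-ws-014', 'fin-db-02']
```

Note that an OS missing from the table is treated as supported here; a stricter version would flag unknown operating systems as findings too.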

How much of your Internet traffic isn’t going through Einstein? I’m looking at you, BLS and other statistical agencies. Do you think for a second that DHS wants to screw with who you get data from, or how, to deliver the GDP report? DHS doesn’t care about that. What I know DHS cares a lot about is the IP addresses of bad guys and email messages carrying attachments known to contain malware.
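
To illustrate the kind of indicator matching described above, here is a toy filter that checks a message’s source address and attachment hashes against known-bad lists. It is not how EINSTEIN is built; the indicator sets, the `Message` type, and the sample data are hypothetical, and the IP addresses come from the documentation-only ranges reserved by RFC 5737.

```python
import hashlib
from dataclasses import dataclass, field

# Hypothetical indicator feeds (203.0.113.0/24 is a documentation-only range).
BAD_IPS = {"203.0.113.66"}
BAD_ATTACHMENT_SHA256 = {hashlib.sha256(b"fake-malware-sample").hexdigest()}


@dataclass
class Message:
    source_ip: str
    attachments: list = field(default_factory=list)  # raw attachment bytes


def matches_indicator(msg: Message) -> bool:
    """True if the sender IP or any attachment hash appears on a known-bad list.
    The check compares addresses and hashes only; it has no notion of what the data means."""
    if msg.source_ip in BAD_IPS:
        return True
    return any(hashlib.sha256(a).hexdigest() in BAD_ATTACHMENT_SHA256 for a in msg.attachments)


print(matches_indicator(Message("198.51.100.7", [b"gdp-table.csv"])))  # False
print(matches_indicator(Message("203.0.113.66")))                      # True
```

The point of the sketch is the author’s: a check like this cares about who is sending and whether a file matches known malware, not about whose statistical data is flowing through the pipe.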

https://www.performance.gov/downloadpdf?file=FY15%20Q3%20Cybersecurity%20FINAL_4.pdf 9

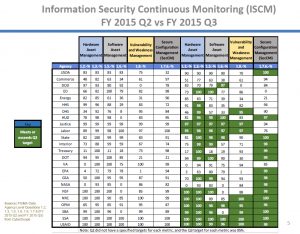

Then don’t forget about asset management and patching. There is no excuse for your agency not to be completely green in this area. The part that concerns me most is the “Vulnerability and Weakness Management” column. Look at that data. It means some agencies (DoD, EPA, DOT) are just letting critical vulnerabilities slide. That is the same as putting up a billboard on the dark web that says “Hack Here!”

While deploying patches should be relatively straightforward, agencies struggle with it far too often. We are so worried about breaking something else with the patch that we forget why deploying it is so important. This is like not locking the front door of your house because you are afraid you might scratch the key. Actually, it is more like not locking the door because you might scratch the key while looking at a suspicious van parked across the street with four people in ski masks inside. How stupid do you have to be?
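
Measuring whether critical vulnerabilities are “sliding” takes little more than dates from the scanner. Below is a minimal sketch that computes the average days to remediate closed critical findings and lists open criticals older than a threshold. The finding records are hypothetical, and the 15-day default simply mirrors the figure in the recommended standard of care later in this section.

```python
from datetime import date

# Hypothetical scanner findings: (finding id, severity, date found, date remediated or None)
findings = [
    ("V-1", "critical", date(2015, 6, 1), date(2015, 6, 9)),
    ("V-2", "critical", date(2015, 6, 3), None),
    ("V-3", "high", date(2015, 6, 5), None),
    ("V-4", "critical", date(2015, 6, 20), date(2015, 7, 10)),
]


def critical_report(findings, as_of, max_days=15):
    """Average days-to-remediate for closed criticals, plus open criticals older than max_days."""
    closed = [(fixed - found).days for _, sev, found, fixed in findings
              if sev == "critical" and fixed is not None]
    overdue = [fid for fid, sev, found, fixed in findings
               if sev == "critical" and fixed is None and (as_of - found).days > max_days]
    avg = sum(closed) / len(closed) if closed else None
    return avg, overdue


print(critical_report(findings, date(2015, 7, 15)))  # (14.0, ['V-2'])
```

The overdue list is reported separately on purpose: an average over closed findings looks healthy while a critical that was never fixed quietly ages out of view.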

https://www.performance.gov/downloadpdf?file=FY15%20Q3%20Cybersecurity%20FINAL_4.pdf 10

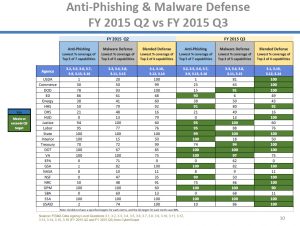

But if you thought I was mad about patching, prepare yourself: I lose my mind over the agencies that can’t even get their anti-phishing and malware defenses up. Failing to keep those messages out of inboxes and, when a dumb employee installs malware, letting it go all E.T. and phone home is… There is no kinder way to put it: dereliction of duty, incompetence, anything on that spectrum. So look at this table. Only four agencies are covered in all three columns, so your agency likely has room for improvement.

My point in this section is that there are certain fundamental things we can all agree make up basic cyber hygiene. When those basics aren’t done, we must call that out specifically. If a doctor failed to deliver the basic standard of care, we would sue him or her for malpractice. When a lawyer or accountant does that, we similarly sue him or her. But CIOs aren’t yet held to this strict professional standard. They should be. The first key to this is FITARA. CIOs need to be empowered to deliver a standard set of security capabilities across the entire organization. That means every component (DHS), every bureau (Labor), every mode (Transportation), every agency (USDA), every opdiv (HHS), and every lab (Energy and NASA). It is no longer acceptable for a CIO to say that he or she can’t be held accountable for the security of every single piece of the enterprise.

The problem with security is that defenders need to be perfect every time, while attackers only need to succeed once. But nobody is perfect. So the standard of care shouldn’t be calibrated to perfection, just to a level that meets the nominal expectations of the people in the organization and of the people who have entrusted their data to it.

My recommended standard of care:

- 100 percent of privileged users use PIV cards.

- 85 percent of unprivileged users use PIV cards.

- Average time to address critical vulnerabilities is ≤ 15 days.

- At least 95 percent of agency personnel complete cyber training each year.

- 100 percent of agency executives complete cyber training each year.

- 100 percent of the workstations are under asset management.

- At least 95 percent of software deployed to workstations is under asset management.

- 100 percent of security incidents are reported in 24 hours or less to US CERT.

- Common indicators of compromise are reported in real time.

- 100 percent of the traffic goes through a Trusted Internet Connection.

- 100 percent of the traffic goes through Einstein 3a.

- Average POA&M duration is ≤ 1 month.

- Physical testing of the contingency plans occurs at least once per year for all critical systems.

- Penetration testing for all critical systems occurs at least once per year.

- There is a rapid response remediation team that has a high degree of training and experience.
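
A standard like this only helps if it can be checked. Here is a minimal sketch that encodes the measurable items from the list as thresholds and reports where an agency falls short. The metric names and the sample agency data are hypothetical, “1 month” is approximated as 30 days, and items that are hard to reduce to a number (real-time indicator sharing, contingency and penetration testing, the response team) are left out.

```python
# Measurable subset of the recommended standard of care: (metric, comparison, threshold).
STANDARD = [
    ("privileged_piv_pct", ">=", 100),
    ("unprivileged_piv_pct", ">=", 85),
    ("avg_days_to_fix_critical", "<=", 15),
    ("staff_training_pct", ">=", 95),
    ("executive_training_pct", ">=", 100),
    ("workstations_managed_pct", ">=", 100),
    ("software_managed_pct", ">=", 95),
    ("traffic_through_tic_pct", ">=", 100),
    ("traffic_through_e3a_pct", ">=", 100),
    ("avg_poam_days", "<=", 30),  # "1 month" approximated as 30 days
]


def gaps(measured):
    """Return metrics that miss the standard, or that were never reported."""
    misses = []
    for metric, op, threshold in STANDARD:
        value = measured.get(metric)
        if value is None:
            misses.append((metric, "not reported"))
        elif op == ">=" and value < threshold:
            misses.append((metric, value))
        elif op == "<=" and value > threshold:
            misses.append((metric, value))
    return misses


# Hypothetical agency scorecard
agency = {
    "privileged_piv_pct": 100,
    "unprivileged_piv_pct": 62,
    "avg_days_to_fix_critical": 41,
    "staff_training_pct": 97,
    "executive_training_pct": 100,
    "workstations_managed_pct": 100,
    "software_managed_pct": 96,
    "traffic_through_tic_pct": 100,
    "avg_poam_days": 28,
}
print(gaps(agency))
```

Treating an unreported metric as a gap is a deliberate choice: if an agency can’t measure something on the list, that is itself a finding.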

I don’t know whether this is a comprehensive standard of care. It is where I would put the standard today. Are there omissions? Definitely. But the only way we are going to collectively raise our game is by agreeing on a standard of care. What is the minimal standard that everyone must be held accountable to? If you asked me today, I would come up with this list. Ask me again in a week and I might change it a little. My goal here is only to write it down and get people thinking about it.

If we don’t take the time to consider this issue and get it right, it looks like the courts will decide it for us. In one case, a casino is suing a security firm, Trustwave, for not effectively evicting the attackers. A second firm, FireEye (Mandiant), came in later and identified breach points that the first firm had not remediated. This relates to my last item about a rapid response remediation team. The case will be interesting, because if Trustwave is found negligent, there must be some standard of care that forms the basis for that finding.

CSAM

It really pains me how few people know what CSAM is. CSAM, the Cyber Security Assessment and Management system, is operated by the Department of Justice. There is a good PowerPoint from the previous administration that walks through what CSAM is and what it does: http://georgewbush-whitehouse.archives.gov/omb/egov/documents/CSAM_DOJ_Briefing_Day1.ppt.

This system was created to be the hub for security information about the government’s systems. It is supposed to be the system that holds the Privacy Impact Assessments (PIAs) and System Security Plans (SSPs). It also tracks which NIST SP 800-53 controls apply to each system and how they are implemented, and it holds the system’s POA&Ms. In the next chapter I’m going to rail on FedRAMP, and I will get into a deep discussion of the specific controls and how they should inherit their way up.

The key here is that agencies need to define their system boundaries (think about platforms from my discussion in the EA chapter). Those system boundaries should also line up with the business cases used for budgeting/CPIC purposes. One of the biggest problems agencies create for themselves is drawing the boundaries differently: one set of boundaries and definitions from a security perspective and a different set from a budgeting perspective. I hope spiders bite your eyes out; that is so stupid. Nobody knows what the hell you are talking about when you try to discuss systems at those agencies. We need one set of boundaries and definitions, and those definitions must persist across security, budget, performance, and all other disciplines.
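
One quick test of whether an agency really has one set of boundaries is to compare the system list its security staff reports against the investment list its budget staff reports. The sketch below assumes each list is just a collection of names; the function `boundary_mismatches` and all sample entries are hypothetical.

```python
def boundary_mismatches(security_systems, budget_investments):
    """Compare the boundaries each discipline reports; two empty lists mean one shared taxonomy."""
    security, budget = set(security_systems), set(budget_investments)
    return {
        "only_in_security": sorted(security - budget),
        "only_in_budget": sorted(budget - security),
    }


# Hypothetical views of the same agency
security_view = ["SharePoint platform", "LAMP platform", "SAP platform", "Grants app"]
budget_view = ["SharePoint platform", "LAMP platform", "Financial Modernization"]
print(boundary_mismatches(security_view, budget_view))
```

Every name that shows up on only one side is a system that security and budget people will talk past each other about.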

I swore I would never use words like taxonomy in this thing. But once you get everyone recognizing consistent boundaries then you can start to measure them consistently and each of the different disciplines will have a recognition that the system they are talking about is, in fact, the same.

For agency implementation of CSAM to be effective, agencies need to go back to their system boundaries and make sure they are drawn at the right level. If you asked me to describe it, I would say network capabilities sit at the lowest level, data centers and cloud capabilities sit above that, systems and frameworks sit on top of those, and applications and websites sit on top of everything. My stack is to the right.

Remember, I would advocate a very small number of networks for an agency, ideally one. As such, you should have one, or no more than two, networks supporting a small number of data centers or cloud infrastructure. On top of that you have your platforms, like SharePoint, LAMP (as I discussed in the EA chapter), SAP, Salesforce, AWS, etc. Built on top of those platforms will be many applications that meet specific business and mission needs.

If you draw your system boundaries at the platform layer, then those platforms will inherit all the physical controls from the data center and network layers. Each platform will hold its own specific, nuanced set of controls at the platform layer, and that lets you differentiate the platforms’ security classifications. This is important.
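
The inheritance idea can be sketched as a chain of layers where each layer adds its own controls to everything provided beneath it. The control identifiers are real NIST SP 800-53 IDs (SC-7 Boundary Protection, PE-3 Physical Access Control, CP-9 System Backup, AC-2 Account Management, AU-2 Audit Events, SI-10 Information Input Validation), but which layer implements which control, along with the `Layer` class and the layer names, is purely illustrative.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Layer:
    name: str
    own_controls: set = field(default_factory=set)
    parent: Optional["Layer"] = None

    def inherited_controls(self) -> set:
        """Controls provided by every layer beneath this one."""
        return self.parent.effective_controls() if self.parent else set()

    def effective_controls(self) -> set:
        """This layer's own controls plus everything it inherits."""
        return self.own_controls | self.inherited_controls()


# Hypothetical stack: network -> data center -> platform -> application
network = Layer("agency network", {"SC-7"})
data_center = Layer("primary data center", {"PE-3", "CP-9"}, parent=network)
lamp = Layer("LAMP platform", {"AC-2", "AU-2"}, parent=data_center)
grants_app = Layer("grants application", {"SI-10"}, parent=lamp)

print(sorted(grants_app.inherited_controls()))  # ['AC-2', 'AU-2', 'CP-9', 'PE-3', 'SC-7']
print(sorted(grants_app.own_controls))          # ['SI-10']
```

Because applications compute their controls from the layers beneath them, a change at the platform layer shows up immediately in every application built on it, which is exactly the “stack” conversation the platform boundary makes possible.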

At one of the agencies where I worked, we had three primary platforms: SharePoint for very low-security work and collaboration, LAMP for more complicated processing and higher security, and SAP for our financial management applications. Each platform filled a very specific need within the organization, and when we had new requirements, we could go back to these platforms to identify which one was best suited to meet our revised needs. From a security perspective, this worked well because we could have this “stack” conversation and know how a change at the platform level would affect the security of a number of applications. From a budgeting perspective, we had discrete security costs at the platform level, and that is where we invested time for C&A (now A&A) activities. From a contingency planning perspective, you reconstitute the platform and then its applications in priority order. It makes sense in so many ways that I can’t understand why anyone would draw their boundaries differently.
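
The contingency rule (platform first, then its applications in priority order) can be sketched as a simple ordering. This assumes the network and data center layers are already back up, and the application names, platforms, and priorities are hypothetical.

```python
def reconstitution_order(apps):
    """apps: list of (application, platform, priority), where 1 is most urgent.
    Walk applications in priority order and bring each platform up just before
    the first application that needs it."""
    order, restored = [], set()
    for app, platform, _ in sorted(apps, key=lambda a: a[2]):
        if platform not in restored:
            order.append(platform)
            restored.add(platform)
        order.append(app)
    return order


# Hypothetical applications on the three platforms described above
apps = [
    ("public website", "SharePoint", 3),
    ("payments", "SAP", 1),
    ("grants", "LAMP", 2),
    ("team sites", "SharePoint", 5),
    ("general ledger", "SAP", 4),
]
print(reconstitution_order(apps))
```

Ordering by application priority rather than by platform means a low-priority application on the first platform restored doesn’t hold up a more urgent one elsewhere.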

Reducing complexity and standardizing in this area also makes sense because CSAM could then be more helpful than it is today. If we reported data to CSAM more consistently, then when there is an incident, CSAM could help us understand the exposure more quickly than we can now. Let’s say we have a system that has been in production for years. On Tuesday the person manning the help desk receives an email from a user describing an exploit in which anyone with access to the system could manipulate the controls and see the details of any other user of the system, including their Social Security number. The help desk person notifies the government project manager, who notifies the bureau CIO and CISO, who notify the departmental CISO. Eventually someone creates an incident that goes to US CERT.

US CERT gets it, and they aren’t familiar with the XYZ system, so they treat it like the 1,000 other incidents that come in that day. The help desk person knows that this is a big-deal system and that the risk will reverberate across the government because of all the system’s users. But nothing in the incident ticket helps anyone differentiate it from the thousand others. If only there were a system that gave us efficient access to the PIA and the SSP so we could have context.

A system like CSAM should be the first place people look to understand the context and exposure presented by an incident in a reported system. But today it is treated as a compliance system, a place to check whether you completed the documentation. It should be more than that: a management support system that helps us understand the magnitude of the impact of an incident involving the system.

This scenario actually happened. The System for Award Management (SAM) had an exploit that could have revealed personally identifiable information 11. GSA knew that this would be a big deal, but how could US CERT know? It couldn’t, not without a system like CSAM to help it understand the context of the affected system. Thus my strong argument is to require all agencies to consistently input their data into CSAM and to give support organizations like US CERT, NCATS, and OMB broad “read” access so they are better prepared to respond when there is an incident.
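
Here is a minimal sketch of what “read” access to system context could buy an incident responder: look up the reported system and rank the ticket from facts a CSAM-style registry would already hold. The `SystemRecord` fields, the registry contents, the priority labels, and the 10,000-user threshold are all hypothetical; the XYZ system stands in for the one in the scenario above.

```python
from dataclasses import dataclass


@dataclass
class SystemRecord:
    """Hypothetical subset of what a CSAM-style registry holds about one system."""
    system_id: str
    name: str
    fips199_impact: str   # "low", "moderate", or "high"
    contains_pii: bool    # taken from the PIA
    user_count: int


REGISTRY = {
    "XYZ": SystemRecord("XYZ", "XYZ system", "moderate", True, 500_000),
    "WIKI": SystemRecord("WIKI", "Team wiki", "low", False, 300),
}


def triage(system_id: str) -> str:
    """Rank an incident using system context instead of treating every ticket alike."""
    record = REGISTRY.get(system_id)
    if record is None:
        return "unknown system: escalate and ask the owner for the PIA and SSP"
    if record.contains_pii and (record.user_count >= 10_000 or record.fips199_impact == "high"):
        return "priority 1"
    if record.contains_pii or record.fips199_impact == "high":
        return "priority 2"
    return "routine"


print(triage("XYZ"))   # priority 1
print(triage("WIKI"))  # routine
```

The logic is trivial on purpose; the hard part, and the author’s point, is having consistent records to look up in the first place.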