Requirements Backlog

There are several important things that must be in place for this process to work and be effective. The first is a requirements backlog. This does not need to be sophisticated; a simple spreadsheet will work. All changes must go onto one single spreadsheet: all changes, defects, bugs, new ideas, everything. If you try to track new items in one list, defects in another, and security changes in yet another, you will drive yourself crazy trying to manage all the different lists.
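As a minimal sketch of the single-list idea, assume the spreadsheet is exported as CSV with hypothetical columns (`id`, `kind`, `title`, `loe_hours`, `priority`, `release`). The Python below keeps every item in one table and treats each view as a filter instead of a separate list. The column names and sample rows are illustrative only.

```python
import csv
import io
from collections import Counter

# Hypothetical single backlog: every kind of change lives in one table,
# distinguished only by the "kind" column.
BACKLOG_CSV = """id,kind,title,loe_hours,priority,release
1,defect,Save button fails on long names,6,High,2.2
2,new feature,Export report to PDF,24,Medium,2.3
3,security,Enforce session timeout,8,High,2.2
4,enhancement,Rename ABC button to XYZ,1,,
"""


def load_backlog(text):
    """Parse the backlog into a list of dicts, one per change item."""
    return list(csv.DictReader(io.StringIO(text)))


backlog = load_backlog(BACKLOG_CSV)

# Any view (defects only, one release, unprioritized items) is a filter on
# the same list, not a separate list to maintain.
defects = [item for item in backlog if item["kind"] == "defect"]
by_kind = Counter(item["kind"] for item in backlog)
unprioritized = [item["id"] for item in backlog if not item["priority"]]
```

Adding a new kind of change, such as a usability finding, is just a new value in the `kind` column; nothing else has to be created or reconciled.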

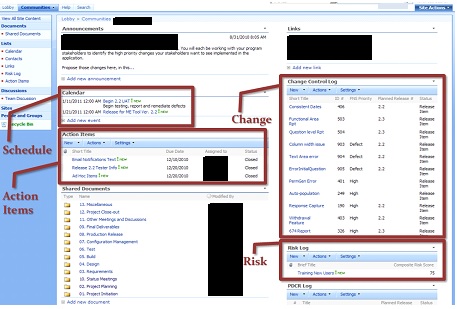

The screenshot above is from an actual project dashboard. I was proud of the way we handled the change portion of the project. You can see that there is a separate SharePoint list for change, and that some of the changes are defects, some are marked high priority, and some haven’t been prioritized yet. You can tell that this project is gearing up for Release 2.2, but a couple of items are already earmarked for Release 2.3. Sorry about the redactions, but if you knew who I was and I said something unpopular, I might not be able to keep working to fix these issues from inside the government.

The really cool part was that you could click on any single item in the change control list and open that record to see a description of what was to be changed and any continuing discussion between the developers and the business owners. Somewhat related to the change control list is the Action Item List to its immediate left. Whenever we encountered anything that could be a blocker, or an area in which we needed more information or help, we would create an action item and assign it a due date so that we answered the questions necessary to get stuff done.

Even though I am pretty strongly against heavy formal processes for documentation and deliverables, I recognize that they are still necessary. In the shared documents you will find all of the materials that were needed to understand and achieve consensus among the different stakeholders. This was important to the team because we had previously relied on email as our document repository, and version control proved to be a problem.

Make Change a Commodity

In the commercial sector, I think it is probably easier to jump to an agile development paradigm; they don’t have the Federal Acquisition Regulation (FAR). But we do. We have to commit to everything in a work statement (SOW, PWS, or SOO). In addition, we have to pick a contract type and decide how we are going to monitor the contractor’s performance.

I can’t cite it because I think Defense Acquisition University removed it, but this image came from them, and it helps to identify the contract type best suited for the activity you need to perform. The left side shows the fixed-price types and the right side shows the cost types. I generally only use fixed-price contracts because I have been burned by a time and materials (T&M) contract in the past. The red row is probably why they deprecated the image. If you think about it, T&M is really a terrible contract type for IT development projects. People will look at a chart like this and say, “But we don’t have a good handle on the requirements, and we don’t know how much effort we need to invest.” Those issues push you over to the cost side of the spectrum. But remember: if we aren’t buying the product and are instead buying the process, then I would argue that we have a very good idea of how much effort we are going to need.

It all comes down to how you describe the work inside the iterations. The table below contains the development portion of my most recent work statement. This is what the government is paying for: process.

> Development. Build Iterations are exactly what they seem to be: fully functional iterations of the application based on the previously agreed scope. The scope (features, story points, change enhancements) for a particular build iteration is identified in the detailed project plan or the Development Plan. The rationale for segmenting the functionality in this manner is that the Government will not wait until the end of a project to test the functionality and offer course corrections. This will lead to two distinct positive impacts: defects are identified earlier in the process, when they are less onerous to remove, and the earned value of the overall effort will be realized over the duration of the project instead of at the end.
>
> The Contractor will deliver Iterations of functionality or features for review. Each iteration will include a facilitated Walkthrough meeting in which the delivered functionality is demonstrated to the user community or stakeholders. The Contractor shall work to correct all errors (defects) and increase usability. Comments delivered within the specified timeframe of the Iteration Walkthrough shall be included in the subsequent iteration or, if it is the final iteration for that Phase, in the product delivered for User Acceptance Testing.
>
> During this Development phase, the stakeholders and users will execute test plans against the delivered functionality. The Development Team will test the functionality prior to delivering an Iteration and deliver a Test Results and Evaluation Report (TRER) to the PM. The TRER will identify all failed test steps in any Test Case. These issues will be identified during the Walkthrough.
>
> Code and features developed by the Contractor and delivered to the Agency shall conform to the Secure Code Policy of the Department. The Government is not required to pay for features or deliverables that do not comply with this requirement, and the Government shall not pay to have non-compliant code brought into compliance with the secure code requirement.
>
> User Acceptance Testing (UAT) is the final point at which the Government decides whether the delivered functionality can be placed in the production environment. All outstanding comments on Iterations shall be addressed in the functionality delivered for UAT. The pass/fail criteria for UAT will be agreement that the Validation Approach for each requirement has been satisfied, that the functionality is consistent with the specified templates and workflows, and that the application has no significant vulnerabilities as identified by the Information Security Office.
>
> A Walkthrough, facilitated by the Contractor, for the stakeholders and/or users shall be required. The testing period will commence with functional testing by the business. The application will undergo penetration testing following the successful completion of functional testing. Upon successful testing completion, the Contractor shall deliver a detailed Installation and Configuration Guide that includes screenshots of each step in the process to build and configure the application in the production environment.
>
> The Development Contractor will deliver Updated Documentation to reflect what has been developed versus what was planned. Each of the core documents will be consistent with the baseline of the release. The updated documents include the System Requirements Specification (SRS) and/or the System Design Document (SDD). Additionally, if changes are made to the Data Requirements Document (DRD) and/or the Database Specification (DB Spec.), then those artifacts will be updated by the Development Team to reflect the progressively elaborated requirements and design.
>
> The Task Order will identify the development paradigm and how code is to be delivered to the Agency. It will identify how many iterations will be required and the duration of each iteration.

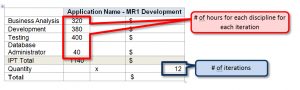

With this description of the work in place, we then have to take the risk out of the equation for the contractors. If I were a contractor bidding on this work without any additional guidance, I would bid really high, because you can fit a lot of effort into this description. We can address this issue in how we order the services. The following table shows what my order for this work looked like.

So on this development project, if each iteration is roughly a month, I was looking for eight weeks of business analysis, nine and a half weeks of development, 10 weeks of testing, and one week of database development per iteration. If you are a contractor thinking about bidding on this work, you look at it and think about two business analysts (also known as user experience designers), two and a half developers, two and a half testers, and part of a person focused on developing the database. That is about seven dedicated people, and that is the optimal number for an agile development team.
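The staffing math above can be checked in a few lines. This is a sketch, assuming a month-long iteration is four work weeks of 40 hours each; the person-week figures come from the order described above, and the names are illustrative.

```python
# Effort ordered per month-long iteration, in person-weeks (from the text).
EFFORT_WEEKS = {
    "business analysis": 8.0,
    "development": 9.5,
    "testing": 10.0,
    "database development": 1.0,
}
WEEKS_PER_ITERATION = 4  # assumption: a "month" is treated as four work weeks
HOURS_PER_WEEK = 40      # assumption: standard 40-hour work week


def staffing(effort_weeks, weeks_per_iteration=WEEKS_PER_ITERATION):
    """Convert person-weeks per iteration into full-time-equivalent staff."""
    return {role: weeks / weeks_per_iteration for role, weeks in effort_weeks.items()}


fte = staffing(EFFORT_WEEKS)
team_size = sum(fte.values())
dev_hours = EFFORT_WEEKS["development"] * HOURS_PER_WEEK
```

Under these assumptions the order works out to about 7.1 full-time equivalents (the 2.375 developers is what the text rounds to two and a half), and the development line comes to 380 developer hours per iteration.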

More precisely, the optimal size for an agile development team is 7 ± 2, so anywhere from five to nine people.[9] When I was developing this particular model, it was for the re-engineering of an application that was already in production. It was getting old and we needed to refresh it. I estimated the work to be somewhat easier than developing a brand-new capability, and that is why I shot a little lower on the development spectrum. The table above is what we gave the offerors to fill out. All they had to do was assign the rates for their people in each of the disciplines. My spreadsheet calculated the cost for each labor category and the total cost for the 12 iterations of development.
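A rough sketch of what such a pricing spreadsheet computes: hours per labor category per iteration times the offeror’s hourly rate, summed per iteration and multiplied by the number of iterations. The hour figures assume 40-hour weeks applied to the person-weeks above; the rates and the `price_order` helper are hypothetical placeholders, not figures from any real bid.

```python
# Hours per labor category per iteration, derived from the person-weeks in
# the order (assumption: 40-hour weeks).
HOURS_PER_ITERATION = {
    "business analyst": 320,
    "developer": 380,
    "tester": 400,
    "database developer": 40,
}


def price_order(rates, hours=HOURS_PER_ITERATION, iterations=12):
    """Return (cost per category per iteration, cost per iteration, total)."""
    per_category = {cat: hours[cat] * rates[cat] for cat in hours}
    per_iteration = sum(per_category.values())
    return per_category, per_iteration, per_iteration * iterations


# Hypothetical hourly rates an offeror might enter; not from any real bid.
offeror_rates = {
    "business analyst": 100.0,
    "developer": 120.0,
    "tester": 90.0,
    "database developer": 130.0,
}
per_category, per_iteration, total = price_order(offeror_rates)
```

With these made-up rates, one iteration prices at $118,800 and the 12-iteration order at $1,425,600. An offeror only changes the values in `offeror_rates`; the hours stay fixed by the government’s order.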

Twelve iterations is somewhat interesting. I actually didn’t know how many iterations we would need. As a PM you have to think about how many times the business people are going to want to see the application during development before they have confidence in the product to be released. That is how I like to describe the development process: we are in the belief business. We are converting people who don’t believe in the new application into believers and evangelists, so that when we release it, they will go out in the world and tell people how great it is. In this case, I was basically saying that we would take a year (one iteration per month, 12 total).

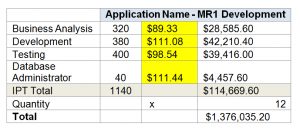

But back to commoditizing change. This table is very similar to the model I had offerors fill out. The difference is that I populated it with the rates for each discipline. To generate the rates, I looked at the GSA Schedule 70 rates for several companies that have done good work and found the labor categories that matched mine. You may need to read the detailed labor category descriptions to find the right fit. The one issue to be aware of is that these Schedule 70 rates are maximum rates. Companies typically discount below them, so if you can compare actual invoice rates, you will be better served.
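One way to composite the rates, sketched under assumptions: take a simple mean of the ceiling rates gathered for each labor category, and optionally apply an estimated discount to approximate what companies actually invoice. The vendor rates, the 10% discount, and the choice of a plain mean are all hypothetical; the text does not say exactly how the rates were combined.

```python
from statistics import mean

# Hypothetical ceiling rates ($/hour) pulled from several vendors' GSA
# Schedule 70 price lists, mapped onto the order's labor categories.
CEILING_RATES = {
    "developer": [125.0, 140.0, 118.0],
    "tester": [95.0, 88.0, 102.0],
}


def composite_rates(ceiling_rates, discount=0.0):
    """Average each category's rates, optionally applying an assumed discount.

    Schedule 70 rates are ceilings, so a discount > 0 approximates the lower
    prices companies actually invoice (the percentage is an assumption).
    """
    return {
        category: round(mean(rates) * (1 - discount), 2)
        for category, rates in ceiling_rates.items()
    }


ceiling = composite_rates(CEILING_RATES)
discounted = composite_rates(CEILING_RATES, discount=0.10)
```

If you can get actual invoice rates, replacing the ceiling figures with those is better than guessing at a discount.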

Then I composited these rates and used the result as the rate for my model. This supported my independent government cost estimate (IGCE). So I know that each iteration will cost me about $114,669.60. What I have done here is critically important: now I know about how much an iteration should cost. I am aiming for 12. When I get to iteration No. 10, I will probably have a good sense of whether 12 iterations was the right number. If it wasn’t, and I need more than 12, then we have made it easy to add more, because we have effectively negotiated the price of an iteration. Assuming that you aren’t trying to run iterations in parallel, you should be able to send over another $114,000 and tack on another iteration, or however many you need.
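The arithmetic that makes an iteration a commodity is simple once the price is fixed. This sketch uses the $114,669.60 per-iteration figure from the IGCE above and Python’s `decimal` module to avoid floating-point rounding on currency; the two-iteration extension is a hypothetical scenario.

```python
from decimal import Decimal

# Per-iteration price from the IGCE in the text.
ITERATION_PRICE = Decimal("114669.60")
PLANNED_ITERATIONS = 12


def order_value(iterations, price=ITERATION_PRICE):
    """Total value for a given number of sequential iterations."""
    return price * iterations


baseline = order_value(PLANNED_ITERATIONS)
# Hypothetical: at iteration 10 it becomes clear two more are needed; the
# add-on is priced at the same negotiated per-iteration rate.
extension = order_value(2)
revised = baseline + extension
```

The 12-iteration baseline comes to $1,376,035.20, and adding two more iterations at the same price brings the order to $1,605,374.40.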

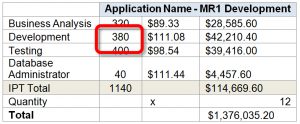

The level of effort numbers are also important. I typically benchmark on the level of developer effort. This is where the change control and the iteration come together. You can see here that for a month-long iteration we have allocated 380 hours of developer effort. I encourage everybody to add new items to the change log. The testers, the business analysts, the government mission people, the security team, the CIO team: everybody should be pushing changes, defects, new items, and everything else to the change log. Keep in mind that when you start doing this you will collect a lot of changes, especially in the beginning. That big batch of changes represents the pent-up demand people had to improve the application, and it is a good thing. Our goal is never to develop and deliver every single change. Some people are stupid and they recommend stupid changes, and stupid changes shouldn’t be implemented. But people want an outlet to recommend the changes they feel strongly about. Plus, we want the development resources working on the highest-priority things all the time. If the list of changes is small, we will never know whether they are working on the most important things, because they are working on everything.

When changes are added to the log, the developer and the government PM (or COR) have to review them and estimate the amount of developer effort each would take. For example, if someone recommends that we change the label of a button from “ABC” to “XYZ,” we review the request, make sure it is clear, and then assign how much effort we think it would take to develop. In this case, one hour. I probably wouldn’t put the change of a label for a single button as the sole change in a request because it is pretty small. Instead, I would bundle that change with a couple of other small changes to get to a more robust level of effort. You may want to develop metrics that help manage the process. For example, I like the 4/40 rule, which states that we don’t track changes of less than four hours of effort, and that any change of more than 40 hours (a work week) needs to be broken down into smaller changes that can be tracked and assigned separately. I don’t want to go more than one week without knowing whether we are off track on something.
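The 4/40 rule translates directly into a check on each change’s estimated effort. This is a minimal sketch; the `bundle_small` helper, which greedily groups sub-4-hour changes in log order until a bundle reaches four hours, is a hypothetical illustration of the bundling described above, not a prescribed method.

```python
MIN_TRACKED_HOURS = 4   # below this, bundle with other small changes
MAX_TRACKED_HOURS = 40  # above this (a work week), split the change


def check_4_40(loe_hours):
    """Classify a change's estimated developer effort under the 4/40 rule."""
    if loe_hours < MIN_TRACKED_HOURS:
        return "bundle"
    if loe_hours > MAX_TRACKED_HOURS:
        return "split"
    return "track"


def bundle_small(changes):
    """Greedily group sub-4-hour changes until each bundle reaches 4 hours.

    `changes` is a list of (title, hours). Any leftover partial bundle is
    returned too, so nothing is silently dropped.
    """
    bundles, current, total = [], [], 0
    for title, hours in changes:
        current.append(title)
        total += hours
        if total >= MIN_TRACKED_HOURS:
            bundles.append((current, total))
            current, total = [], 0
    if current:
        bundles.append((current, total))
    return bundles
```

For example, the 1-hour label change returns `bundle`, a 16-hour change returns `track`, and a 60-hour change returns `split`. Exactly 4 or exactly 40 hours is tracked as-is.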

My trick is that each month in status meetings (or whatever length of time your sprint is, e.g. two weeks), we review this change log together as a group and prioritize the changes for the next iteration or sprint. I may have 300 changes on the log. Of those, 250 have a developer level of effort assigned. If the release going out the door next week is Release 2.2, then we are meeting to talk about which items will be prioritized for Release 2.3, which should go to production about five weeks from now. Thus, of those 250, we are programming some of them for Release 2.3. How many get into that set depends on two things: the priority and the level of effort. Remember that what we have allocated for each release is 380 hours. When we set our priorities, it is likely that we will prioritize changes that add up to 450 hours or more. This is where the government has to make the tough calls. This process forces people to actually sit down and weigh the importance of their changes against the changes recommended by other people. You have to defer some changes to subsequent releases. We typically slap a “Deferred” tag on those changes so that they are considered first next month, when we are programming changes for Release 2.4. Once you get the level of effort down to 380 or fewer hours, lock it in; this becomes the list of features that you will be testing for Release 2.3.
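The programming step can be approximated as a capacity-bounded selection. The sketch below assumes a numeric priority (1 = most important), sorts carried-over “Deferred” items first, and then takes changes in priority order as long as they fit in the 380-hour budget, tagging the rest “Deferred.” The `Change` class and `program_release` function are hypothetical, and this first-fit pass is only a stand-in for the group discussion in which the government makes the tough calls.

```python
from dataclasses import dataclass, field

CAPACITY_HOURS = 380  # developer hours allocated per release (from the text)


@dataclass
class Change:
    title: str
    loe_hours: int
    priority: int  # assumption: 1 = most important
    tags: set = field(default_factory=set)


def program_release(changes, capacity=CAPACITY_HOURS):
    """Pick changes for the next release without exceeding capacity.

    Items tagged "Deferred" from last month sort first, then by priority.
    Anything that does not fit is tagged "Deferred" for next month.
    """
    # False sorts before True, so deferred items come first.
    ordered = sorted(changes, key=lambda c: ("Deferred" not in c.tags, c.priority))
    selected, used = [], 0
    for change in ordered:
        if used + change.loe_hours <= capacity:
            selected.append(change)
            used += change.loe_hours
            change.tags.discard("Deferred")
        else:
            change.tags.add("Deferred")
    return selected, used
```

Because the pass is first-fit, a smaller, lower-priority change can still slip in after a larger one is skipped; the group should review the result rather than accept it blindly. The `capacity` parameter lets you run the same logic with a smaller starting budget.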

When you are just starting out with this process, it may be easier to start small and build momentum. When I was trying out these concepts, I started with just 120 hours. That is about three weeks of developer effort, which is essentially one person. This can still work, but keep in mind that the size of the development team must not drop below five people. So if you are going to aim low, you need to make sure that you have some really good people who can solve problems on their own. When you have two or more developers, they can bounce ideas off each other and get all the benefits of co-development.