This is the second article in a series about the most important technology trends. The first article postulated that the key trend is the evolution of how we work. It discussed working like a startup and using new methods of working, including agile development, open source, consumerization, continuous development/continuous integration, iterating with minimum viable products, DevOps, crowdsourcing, maker communities, and reducing wait states.

In this series, Tom Soderstrom, the IT Chief Technology and Innovation Officer of NASA’s Jet Propulsion Laboratory, discusses the future of technology: how work evolves, key technologies, and how to engage the next generation.

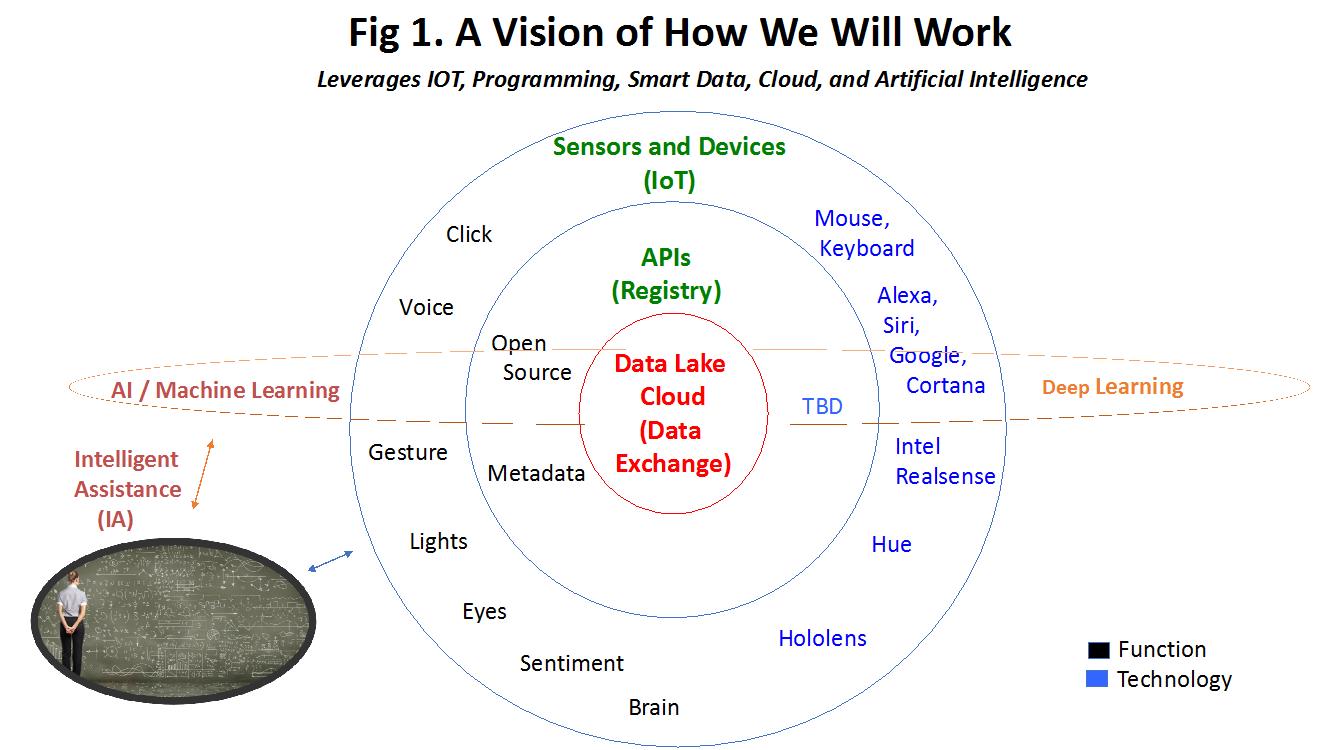

This article focuses on the key technologies that will deliver maximum benefits, especially when used together. Over the next three years, to work even faster and more effectively, we will use new natural user interfaces (NUI) to easily and seamlessly interact with previously unimaginable amounts of data in the cloud from real-time sensors created through the Internet of Things. We will make data-driven decisions in real time aided by complex algorithms that help make sense of the data through artificial intelligence (AI). And we will proactively measure everything through predictive and prescriptive analytics.

The way we interact with computing systems will change. Today, we must know what data we need, request permission, and then use a keyboard and mouse to access and, often painfully, combine data from different sources. It’s extremely labor intensive and, because of the many wait states, it’s too easy to lose the ever-important momentum and fail to deliver on time.

Tomorrow, we will simply use a NUI to query the system and receive an answer in seconds. As illustrated in Fig. 1, a scientist, engineer, programmer, or other stakeholder will simply ask a question using her or his voice, gesture to the camera, touch the data on a large touch screen, blink through the smart glasses, and/or think about the problem wearing a smart “helmet,” and the answer will appear. If we are successful, it will seem like AI magic.

Fig. 1 (Image: Tom Soderstrom)

So, what’s behind the scenes of this magic? The data will reside in clouds and be accessible through well-known APIs. The stakeholder’s questions will kick off a set of database queries and/or AI code that presents the solutions back to the user. Note that the system intelligently assists the human by presenting the data in the way that the user wants it and specifying the likelihood that the answer is correct. The user then chooses the action. This is the essence of Intelligent Assistance or IA.

IA will evolve to AI at the user’s desired pace. Once the user trusts the IA recommendations, she or he can choose to let the system implement the recommendation automatically. At that point, we have removed an additional wait state and have evolved to true AI for that user and for that use case.
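To make the hand-off from IA to AI concrete, here is a minimal Python sketch. It is an illustration only, not a JPL implementation: the names `Recommendation`, `Assistant`, `auto_threshold`, and `ask_user` are all hypothetical. The system always reports a likelihood that its answer is correct, and it acts on its own only after the user has opted in by setting a threshold.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Recommendation:
    action: str        # what the system proposes to do
    confidence: float  # estimated likelihood (0.0 to 1.0) that the answer is correct


class Assistant:
    """Hypothetical wrapper: recommends by default, acts alone only when allowed."""

    def __init__(self, auto_threshold: Optional[float] = None):
        # None means pure IA: the human always chooses the action.
        # A number means the user trusts the system above that confidence.
        self.auto_threshold = auto_threshold

    def handle(self, rec: Recommendation,
               ask_user: Callable[[Recommendation], bool]) -> str:
        # AI mode for this user and use case: no wait state for approval.
        if self.auto_threshold is not None and rec.confidence >= self.auto_threshold:
            return f"auto: {rec.action}"
        # IA mode: present the recommendation and let the human decide.
        if ask_user(rec):
            return f"approved: {rec.action}"
        return "declined"
```

A user who has not yet built trust would create `Assistant()` and approve each recommendation. Later, the same user could switch to something like `Assistant(auto_threshold=0.9)` for one use case while keeping the manual step everywhere else, which is how the pace of infusion stays in the user’s hands.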

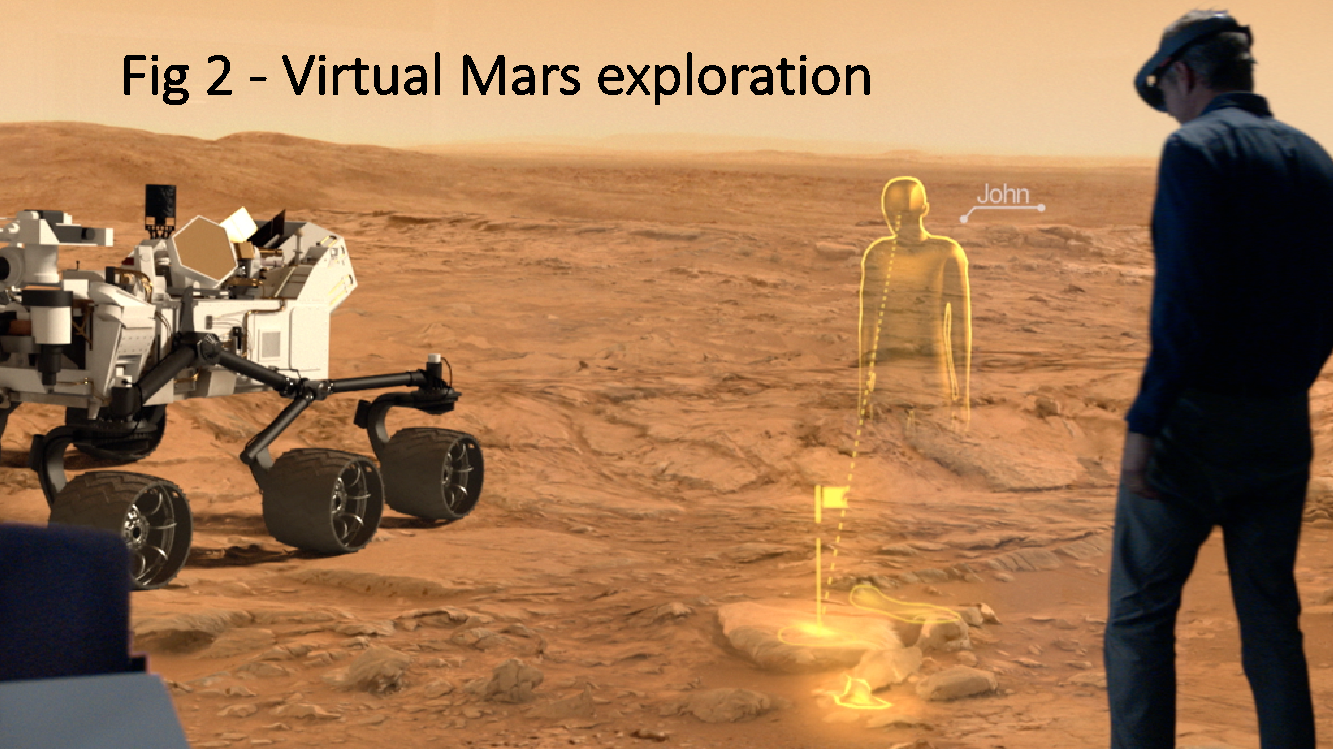

There are many current and future examples. Today, AI software called AEGIS runs on Curiosity. While Curiosity is driving on Mars, AEGIS automatically identifies interesting rocks and tells Curiosity to take photos, which are then sent to Earth. Humans can investigate them using augmented reality through Microsoft’s HoloLens smart glasses and ask Curiosity to turn around to drill into the rock (see Fig. 2).

Fig. 2 (Image: Tom Soderstrom)

If this sounds too simple, we’re on the right track. AI is complicated and many people fear it. However, no one fears Alexa, Siri, or Cortana, because they appear simple and because they simply advise the human, who takes the action. Hence the emphasis on IA.

What technologies are needed for us to execute this vision? JPL has experimented with all of the technologies below and has found them both promising and useful.

- Open source and commercial analytics tools: They are readily available, inexpensive, growing, and evolving quickly.

- Cloud computing: This includes the related technologies of advanced and unlimited computing and storage, serverless computing, edge computing, containers, microservices, and API management. The list will continue to grow.

- Crowdsourcing: We will partner more within and between NASA Centers and with external organizations such as Kaggle for data science and analytics.

- Internet of Things: IoT will allow us to automatically collect highly relevant data, gain automated situational awareness, and interact in an easy, natural way with any system.

- AI frameworks and libraries: We can choose from available open source options and run the calculations in the cloud. Examples include TensorFlow from Google, MXNet from Amazon, Cognitive Toolkit from Microsoft, Torch, Caffe, Keras, Deeplearning4j, Theano, and many more.

- IA: By focusing on the user over the technology, we will employ Intelligent Assistance as a way to infuse AI while enabling human end-users to set the pace of infusion.

How can we deploy these technologies?

- Question farm: Iterate quickly with end users to find low-hanging use cases.

- Experiment: Try the minimum viable product quickly with users and developers using small teams.

- Focus on the data: Ensure that the data is accessible, consumable, reusable, and understandable.

- Build in cybersecurity: Ensure that the solutions and the data are appropriately secured.

- Take the quick, easy path: If we can’t get access to the data, we will drop the experiment and instead pursue the prototypes/experiments that show value with minimum wait states. We will also make it easy for the users by making the solutions easy to understand, build, and (re)use.

- Measure everything: Is there enough end-user value to continue with this experiment?

- Double down: If the experiment shows value and has an impact, we will iterate quickly.

Whether you think this is the right approach or think that it’s complete and utter hype, we’d love to hear from you about how we can help NASA answer the big questions that affect all of humanity. Next in the series, I will discuss IA and AI in more detail, and I would appreciate hearing about your potential or actual use cases.